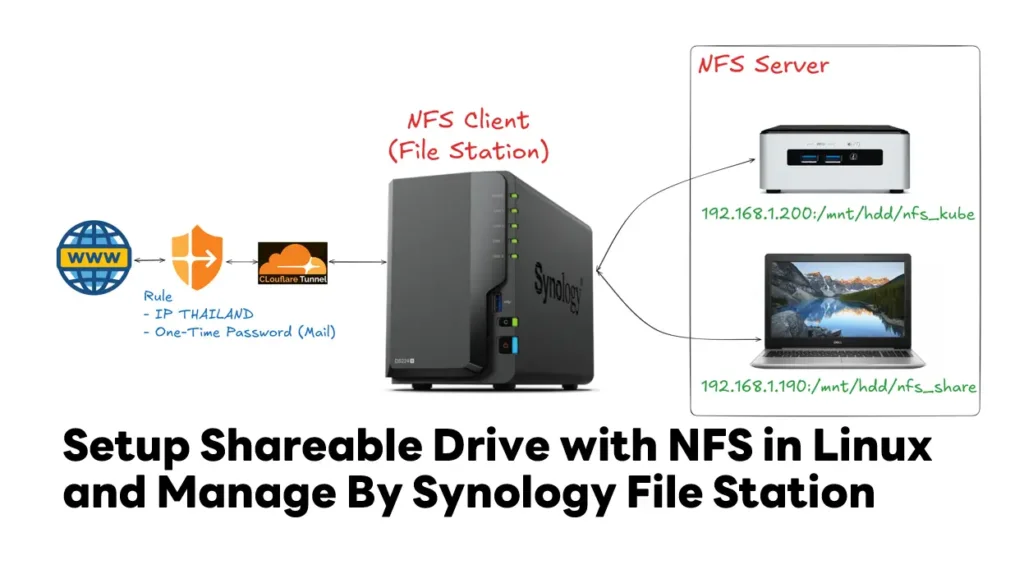

Currently, I have a collection of hardware—a NAS, some old notebooks, and a NUC I bought for work years ago. I wanted to centralize my storage management and access, using my Synology NAS as the core. This led me to research the best way to integrate them, which I’ve now documented in this blog post.


What Is NFS, and Why Use It?

NFS (Network File System) is a protocol that lets machines share files and folders over a network. Other protocols, such as SMB and AFP, serve the same purpose.

While there are many ways to connect devices, I chose NFS as the primary protocol for this project. It's lightweight and stable, and it copes well when several Linux machines, such as the NUC or the notebooks, access the NAS at the same time. In this section, I'll walk through sharing a folder from a Linux machine over NFS, setting its permissions, and mounting it on the Synology, a setup that works well as the storage backbone of a home lab.

First, let's set up the NFS server on the old notebook.

➡️ Install the nfs-kernel-server package, which provides the NFS server:

sudo apt update -y && sudo apt install nfs-kernel-server -y

➡️ Create the directory you want to share with the client (the Synology NAS). For example, I share a folder on the old notebook's hard drive, /mnt/hdddrive/nfs_alldata. You can also create separate folders by purpose, such as media/nfs_sharemedia.

sudo mkdir -p /mnt/hdddrive/nfs_alldata

➡️ To make sure the client machine can access this directory and add files to it, give ownership of the directory to the nobody user and the nogroup group:

sudo chown nobody:nogroup /mnt/hdddrive/nfs_alldata

➡️ Next, give everyone read, write, and execute permissions on this directory:

sudo chmod 777 /mnt/hdddrive/nfs_alldata

Giving full permissions might seem counterintuitive for security, but:

- Combined with nobody:nogroup ownership, it is a common practice for NFS shares. It avoids the 'Permission Denied' errors caused by mismatched user IDs (UIDs) between the NFS server (the notebook or NUC) and the NAS.

- By default (the root_squash option), the NFS server maps requests from the client's root user to 'nobody', and the all_squash option does the same for every client user. Those mapped requests can read and write inside the share, but they gain no special rights over critical system files outside the shared directory.
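After running chown and chmod, you can confirm the result. The following is a minimal sketch, assuming a Debian/Ubuntu server with GNU coreutils (the `stat -c` format is GNU-specific); `check_share_dir` is a hypothetical helper name, not part of any NFS tooling. It prints the directory's owner:group and octal mode, and returns non-zero if the mode differs from the expected value (777 by default, matching the setup above).

```shell
#!/usr/bin/env bash
# Sketch: report owner:group and octal mode of an NFS share directory,
# and fail if the mode is not the expected one (777 in this post's setup).
# Assumes GNU coreutils `stat -c` (Debian/Ubuntu); BSD/macOS stat differs.

# check_share_dir DIR [EXPECTED_MODE]   (hypothetical helper name)
check_share_dir() {
  local dir="$1" expected="${2:-777}"
  if [ ! -d "$dir" ]; then
    echo "missing: $dir" >&2
    return 2
  fi
  local owner mode
  owner="$(stat -c '%U:%G' "$dir")"   # e.g. nobody:nogroup
  mode="$(stat -c '%a' "$dir")"       # octal mode without leading zero, e.g. 777
  echo "$dir $owner $mode"
  # Exit status of the function: 0 only when the mode matches
  [ "$mode" = "$expected" ]
}
```

For example, `check_share_dir /mnt/hdddrive/nfs_alldata` should print the path followed by `nobody:nogroup 777` once the commands above have run.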

➡️ Next, configure the NFS server to share this directory with the client machine.

sudo vi /etc/exports # or: sudo nano /etc/exports

➡️ Add a line like the following to define the share:

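- This allows only the client with IP 192.168.0.128 to access the share, with these options: rw (read/write); sync (the server confirms a write only after the data has been written to disk, which protects data integrity at some cost in speed); and no_subtree_check (disables subtree checking, which improves reliability when files are renamed or moved within the share).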

/mnt/hdddrive/nfs_alldata 192.168.0.128(rw,sync,no_subtree_check)

- You can also use the * wildcard to let any host that can reach the server access the share; where possible, prefer a specific IP as in the line above.

/mnt/hdddrive/nfs_alldata *(rw,sync,no_subtree_check)
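If you script this setup, it helps to generate the exports entry and append it only once, so re-running the script does not duplicate shares. This is a minimal sketch, assuming bash; `exports_line` and `add_export_once` are hypothetical helper names. The format it produces, `PATH CLIENT(OPTIONS)`, is the standard /etc/exports syntax used above. Note there is no space between the client and the opening parenthesis: `192.168.0.128 (rw)` would instead give 192.168.0.128 the default (read-only) options and grant read/write to every other host.

```shell
#!/usr/bin/env bash
# Sketch: build an /etc/exports entry and append it only if an identical
# line is not already present. Helper names are hypothetical.

# exports_line PATH CLIENT OPTIONS -> "PATH CLIENT(OPTIONS)"
# No space before "(": with a space, the options would apply to all hosts.
exports_line() {
  printf '%s %s(%s)\n' "$1" "$2" "$3"
}

# add_export_once FILE LINE: append LINE to FILE unless it already exists.
add_export_once() {
  local file="$1" line="$2"
  # -q quiet, -x whole-line match, -F fixed string, so "*" and "()"
  # in exports entries are matched literally rather than as a regex
  if [ -f "$file" ] && grep -qxF -- "$line" "$file"; then
    return 0
  fi
  printf '%s\n' "$line" >> "$file"
}

# Usage (writing /etc/exports needs root, e.g. run the script with sudo):
#   line="$(exports_line /mnt/hdddrive/nfs_alldata 192.168.0.128 rw,sync,no_subtree_check)"
#   add_export_once /etc/exports "$line"
```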

➡️ Export the shares defined in /etc/exports so the client machine(s) can access them:

sudo exportfs -a

This command tells the NFS server to re-read /etc/exports and export the directories listed there, applying your changes without restarting the NFS service or interrupting active connections. If you have removed or changed entries, sudo exportfs -ra also re-syncs the export table and un-exports entries that are no longer in the file.

➡️ Optionally, restart the NFS service to make sure the changes are applied:

sudo systemctl restart nfs-kernel-server

➡️ Additionally, enable the NFS server so it starts automatically on boot:

sudo systemctl enable nfs-kernel-server

Check the NFS Server Version and Firewall for the Client

➡️ Check which NFS versions (v3/v4) and transport protocols (TCP/UDP) the server offers:

rpcinfo -p

➡️ The sample output below shows that the server offers NFS v3 and v4 over TCP on port 2049, with the portmapper (rpcbind) on port 111. (On Debian, the default is NFSv4 over TCP.)

adminping@lab-inspiron5570:~$ rpcinfo -p

| program | vers | proto | port | service |
|---|---|---|---|---|
| 100000 | 4 | tcp | 111 | portmapper |
| 100000 | 3 | tcp | 111 | portmapper |
| 100000 | 2 | tcp | 111 | portmapper |
| 100000 | 4 | udp | 111 | portmapper |
| 100000 | 3 | udp | 111 | portmapper |
| 100000 | 2 | udp | 111 | portmapper |
| 100024 | 1 | udp | 36328 | status |
| 100024 | 1 | tcp | 35171 | status |
| 100005 | 1 | udp | 57399 | mountd |
| 100005 | 1 | tcp | 42891 | mountd |
| 100005 | 2 | udp | 52564 | mountd |
| 100005 | 2 | tcp | 35791 | mountd |
| 100005 | 3 | udp | 44684 | mountd |
| 100005 | 3 | tcp | 56505 | mountd |
| 100003 | 3 | tcp | 2049 | nfs |
| 100003 | 4 | tcp | 2049 | nfs |
| 100227 | 3 | tcp | 2049 | nfs_acl |
| 100021 | 1 | udp | 36862 | nlockmgr |
| 100021 | 3 | udp | 36862 | nlockmgr |
| 100021 | 4 | udp | 36862 | nlockmgr |
| 100021 | 1 | tcp | 44669 | nlockmgr |
| 100021 | 3 | tcp | 44669 | nlockmgr |
| 100021 | 4 | tcp | 44669 | nlockmgr |
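To read the rpcinfo output at a glance, you can filter it down to the NFS service rows. This is a sketch assuming bash and awk; `nfs_versions` is a hypothetical helper that reads `rpcinfo -p` text on standard input and relies on the five-column layout (program, vers, proto, port, service) shown above.

```shell
#!/usr/bin/env bash
# Sketch: summarize which NFS versions/transports the server registers.
# nfs_versions is a hypothetical helper; it reads `rpcinfo -p` text on stdin.
nfs_versions() {
  # Column 5 is the service name. Keeping only exact "nfs" rows skips
  # nfs_acl, mountd, nlockmgr, and the header line ("service").
  awk '$5 == "nfs" { printf "NFSv%s over %s, port %s\n", $2, $3, $4 }'
}

# Usage on the NFS server:
#   rpcinfo -p | nfs_versions
```

Fed the sample output above, it reports NFSv3 and NFSv4, both over TCP on port 2049.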

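➡️ Add firewall rules that allow incoming connections from the local subnet to port 2049 (NFS) and port 111 (rpcbind/portmapper); replace 192.168.1.0/24 with the subnet your NAS is on. Port 2049 alone is enough for NFSv4. NFSv3 also needs mountd and the lock manager (nlockmgr), which listen on dynamically assigned ports as the rpcinfo output shows, so choose NFSv4 when mounting on the Synology unless you pin those ports.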

sudo ufw allow from 192.168.1.0/24 to any port 2049

sudo ufw allow from 192.168.1.0/24 to any port 111
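If you prefer to script the firewall step, a loop over the ports keeps the rules consistent. This is a sketch assuming ufw and the two ports used above; `allow_nfs_ports` and the `DRY_RUN` variable are hypothetical names introduced here so you can preview the commands before applying them.

```shell
#!/usr/bin/env bash
# Sketch: open the NFS-related ports for one subnet with ufw.
# Ports follow this post (2049 = nfsd, 111 = rpcbind/portmapper);
# the subnet is passed in. Set DRY_RUN=1 to print instead of apply.
allow_nfs_ports() {
  local subnet="$1" port
  for port in 2049 111; do
    if [ -n "${DRY_RUN:-}" ]; then
      echo "ufw allow from $subnet to any port $port"
    else
      sudo ufw allow from "$subnet" to any port "$port"
    fi
  done
}

# Usage:
#   DRY_RUN=1 allow_nfs_ports 192.168.1.0/24   # preview the rules
#   allow_nfs_ports 192.168.1.0/24             # apply them
```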

➡️ Check that the firewall is active and the new rules are listed:

sudo ufw status verbose

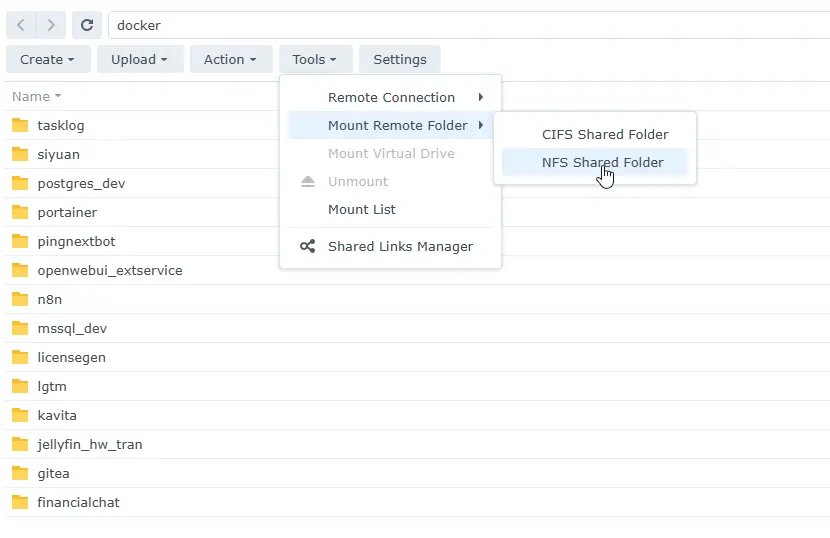
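Before switching to the Synology, you can check from another Linux machine on the same subnet that the firewall lets NFS traffic through. This is a minimal sketch, assuming bash (its built-in `/dev/tcp/HOST/PORT` redirection) and coreutils `timeout`; `host_port_open` is a hypothetical helper name, and it only tests TCP reachability of port 2049, not NFS export permissions.

```shell
#!/usr/bin/env bash
# Sketch: test whether HOST accepts TCP connections on PORT.
# host_port_open is a hypothetical helper name.
host_port_open() {
  local host="$1" port="$2"
  # Redirecting to /dev/tcp/HOST/PORT makes bash attempt a TCP connect;
  # timeout stops it hanging when a firewall silently drops packets.
  timeout 3 bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$host" "$port" 2>/dev/null
}

# Usage (server address from this post's example):
#   host_port_open 192.168.1.190 2049 && echo "NFS port reachable"
```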

In Synology File Station, mount the remote NFS shared folder:

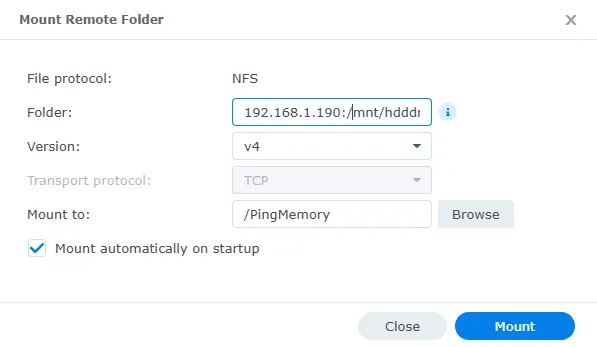

➡️ Click Tools > Mount Remote Folder > NFS Shared Folder.

➡️ In the Folder field, specify the remote folder path in the format of Remote_Server:/Remote_Folder_Path, such as 192.168.1.190:/mnt/hdddrive/nfs_alldata

➡️ Select the desired NFS version and transport protocol.

➡️ Click Browse to select or create an empty destination folder on your Synology NAS for mounting the remote folder.

Tip: tick the Mount automatically on startup checkbox if you want your Synology NAS to mount this remote folder on every startup or reboot.

➡️ Click Mount to mount the remote folder on the destination folder. You can now browse and manage the remote folder from your Synology NAS; in File Station, it appears with a network-drive folder icon.


Discover more from naiwaen@DebuggingSoft
